Few-shot learning with multi-scale is a powerful technique that enables machine learning models to learn effectively from limited data. Discover its definitions, applications, and benefits here at LEARNS.EDU.VN. Unlock the potential of efficient learning and master complex tasks with minimal examples. Dive in to explore cutting-edge techniques and real-world implementations of this innovative approach.

1. Introduction to Few-Shot Learning with Multi-Scale

Few-shot learning with multi-scale combines two ideas: learning from only a handful of labeled examples, and representing each input at several levels of abstraction. This mirrors the way people recognize patterns and concepts after minimal exposure. Multi-scale analysis lets a model capture both fine-grained details and broad contextual information, which helps it discern subtle differences when there are too few examples to learn those distinctions from data alone. The combination is most useful where data acquisition is costly, time-consuming, or infeasible. By reducing reliance on massive datasets, it lowers data collection and labeling effort and can speed up the deployment of intelligent systems in data-constrained environments, although processing several scales carries a computational cost of its own.

1.1. Understanding the Basics of Few-Shot Learning

Few-shot learning is a type of machine learning where the model is trained to recognize and classify new objects or patterns with only a few examples. This contrasts sharply with traditional machine learning, which often requires hundreds or thousands of examples to achieve satisfactory performance. The goal is to enable models to quickly adapt to new tasks or categories, similar to how humans can learn new concepts with minimal information. This capability is particularly useful in scenarios where obtaining large labeled datasets is impractical or expensive.
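Few-shot tasks are commonly framed as N-way K-shot episodes: N classes, K labeled support examples per class, and a handful of query examples to classify. The sketch below samples one such episode from a toy dataset. The function name `sample_episode` and the toy data are illustrative assumptions, not part of any particular library.

```python
import random

def sample_episode(data_by_class, n_way, k_shot, n_query, rng):
    """Sample one N-way K-shot episode.

    data_by_class: dict mapping class label -> list of examples.
    Returns (support, query), each a list of (example, label) pairs.
    """
    classes = rng.sample(sorted(data_by_class), n_way)
    support, query = [], []
    for label in classes:
        # Draw K support and n_query query examples without overlap.
        picked = rng.sample(data_by_class[label], k_shot + n_query)
        support += [(x, label) for x in picked[:k_shot]]
        query += [(x, label) for x in picked[k_shot:]]
    return support, query

# Toy dataset (hypothetical): 5 classes with 10 examples each.
toy_data = {c: [f"{c}_{i}" for i in range(10)] for c in "ABCDE"}
support, query = sample_episode(toy_data, n_way=3, k_shot=2, n_query=4,
                                rng=random.Random(0))
print(len(support), len(query))  # 6 12
```

Here a 3-way 2-shot episode yields 6 support examples, and the model would be asked to label the 12 query examples using only those 6.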

1.2. The Role of Multi-Scale Analysis in Enhancing Few-Shot Learning

Multi-scale analysis enhances few-shot learning by allowing the model to capture information at different levels of detail. This means the model can understand both the fine-grained features and the broader context of the data. For instance, in image recognition, a multi-scale approach might consider both the texture of an object and its overall shape. By integrating these different scales of information, the model becomes more robust and accurate, especially when dealing with limited data.

2. The Core Concepts of Few-Shot Learning with Multi-Scale

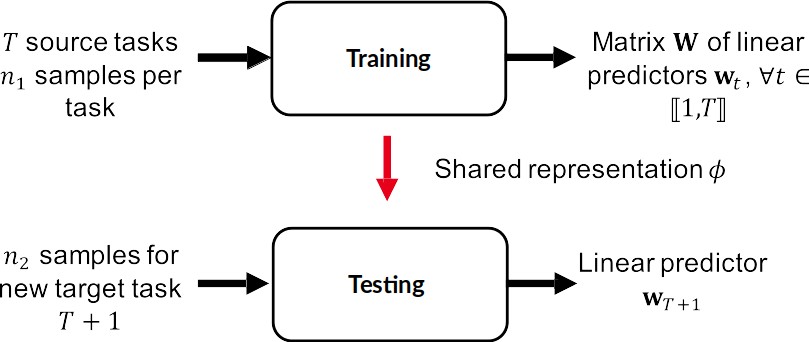

To fully grasp the potential of few-shot learning with multi-scale, it’s essential to understand the fundamental concepts that underpin this innovative approach. This involves exploring meta-learning, understanding representation learning, and delving into the specifics of multi-scale representation learning. These concepts work in concert to enable models to learn effectively from limited data, making them highly adaptable and efficient.

2.1. Meta-Learning: Learning to Learn

Meta-learning, often referred to as “learning to learn,” is a crucial concept in few-shot learning. Instead of training a model from scratch for each new task, meta-learning trains a model on a variety of tasks so that it can quickly adapt to new, unseen tasks with only a few examples. The meta-learner learns to identify patterns and strategies that are effective across different tasks, allowing it to generalize more efficiently.

2.2. Representation Learning: Extracting Meaningful Features

Representation learning focuses on automatically discovering the representations needed for feature detection or classification from raw data. Effective representation learning algorithms can extract meaningful and discriminative features from limited data, which is essential for few-shot learning. These features capture the underlying structure and patterns in the data, allowing the model to make accurate predictions even with few examples.

2.3. Multi-Scale Representation Learning: Capturing Information at Different Levels

Multi-scale representation learning enhances the capabilities of few-shot learning by capturing information at various levels of detail. This approach involves creating representations that incorporate both fine-grained and coarse-grained features, allowing the model to understand the data from different perspectives. By integrating multi-scale representations, the model can better handle variations in scale, orientation, and viewpoint, making it more robust and accurate.
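One simple way to picture a multi-scale representation is to pool the same input at several window sizes and concatenate the results. The following is a minimal sketch on a 1D signal. The helper names and the choice of windows (1, 2, 4) are assumptions made for illustration, and real systems usually learn their multi-scale features rather than using fixed pooling.

```python
def average_pool(signal, window):
    """Non-overlapping average pooling; drops a trailing partial window."""
    return [sum(signal[i:i + window]) / window
            for i in range(0, len(signal) - window + 1, window)]

def multi_scale_features(signal, windows=(1, 2, 4)):
    """Concatenate pooled views of the signal, from fine to coarse."""
    features = []
    for w in windows:
        features.extend(average_pool(signal, w))
    return features

signal = [1.0, 3.0, 2.0, 6.0, 5.0, 7.0, 4.0, 8.0]
features = multi_scale_features(signal)
print(len(features))  # 14 (8 fine + 4 medium + 2 coarse values)
print(features[8:])   # [2.0, 4.0, 6.0, 6.0, 3.0, 6.0]
```

The first 8 values keep every detail of the signal, while the last 2 summarize each half of it, so a downstream classifier sees both views at once.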

3. Methodologies and Techniques in Few-Shot Learning with Multi-Scale

Several methodologies and techniques have been developed to implement few-shot learning with multi-scale effectively. These include metric-based learning, model-based learning, optimization-based learning, and the use of multi-scale convolutional neural networks. Each approach offers unique advantages and is suited to different types of data and tasks.

3.1. Metric-Based Learning: Measuring Similarity

Metric-based learning focuses on learning a distance metric that can effectively measure the similarity between different data points. In the context of few-shot learning, this involves learning a metric that can accurately compare new, unseen examples with the few available examples from known classes. Common metric-based learning techniques include Siamese networks, matching networks, and prototypical networks.

3.1.1. Siamese Networks

Siamese networks pass two different inputs through two identical branches that share the same weights, then compare the resulting embeddings using a distance metric. This approach is particularly useful for few-shot learning because it learns to distinguish between similar and dissimilar pairs of examples, and even a small dataset yields many training pairs. The network is trained to minimize the distance between examples from the same class and to push examples from different classes apart, typically until they are at least a chosen margin away from each other.
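The pairwise training objective can be sketched with a contrastive loss, one common choice for Siamese networks. In this minimal example the "network" is a single shared linear layer, and the weight matrix `W`, the margin values, and the function names are all assumptions for illustration.

```python
import math

def embed(x, weights):
    """Shared embedding: one linear layer applied to either input."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def euclidean(a, b):
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

def contrastive_loss(x1, x2, same_class, weights, margin=1.0):
    """Pull same-class pairs together; push different-class pairs
    apart until they are at least `margin` away."""
    d = euclidean(embed(x1, weights), embed(x2, weights))
    if same_class:
        return d ** 2
    return max(0.0, margin - d) ** 2

# Hypothetical 2x2 weight matrix, used for both inputs (the twin branches).
W = [[1.0, 0.0], [0.0, 1.0]]
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], True, W))               # 25.0
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], False, W, margin=6.0))  # 1.0
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], False, W, margin=2.0))  # 0.0
```

The last call shows why the margin matters: a different-class pair that is already far enough apart contributes no loss, so training effort goes to the pairs that are still confusable.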

3.1.2. Matching Networks

Matching networks learn to map a small support set of labeled examples and a new, unlabeled example to a predicted label. The network uses attention mechanisms to compare the new example with the examples in the support set and assign a label based on their similarity. This approach is effective because it can quickly adapt to new tasks by learning to focus on the most relevant examples in the support set.
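The attention step can be illustrated as a softmax over similarities between the query and each support example, with every support example voting for its own label. This is a simplified sketch that assumes the embeddings have already been computed (in a real matching network the embedding functions are learned), and the function names and toy vectors are hypothetical.

```python
import math

def cosine(a, b):
    dot = sum(ai * bi for ai, bi in zip(a, b))
    norm_a = math.sqrt(sum(ai * ai for ai in a))
    norm_b = math.sqrt(sum(bi * bi for bi in b))
    return dot / (norm_a * norm_b)

def attention_classify(query, support):
    """support: list of (embedding, label). Returns label -> probability.

    Attention weights are a softmax over cosine similarities; each
    support example votes for its own label with its weight.
    """
    sims = [cosine(query, emb) for emb, _ in support]
    exps = [math.exp(s) for s in sims]
    total = sum(exps)
    probs = {}
    for e, (_, label) in zip(exps, support):
        probs[label] = probs.get(label, 0.0) + e / total
    return probs

support = [([1.0, 0.0], "cat"), ([0.9, 0.1], "cat"),
           ([0.0, 1.0], "dog"), ([0.1, 0.9], "dog")]
probs = attention_classify([0.8, 0.2], support)
print(max(probs, key=probs.get))  # cat
```

Because the prediction is just a weighted vote over the support set, swapping in a support set for entirely new classes requires no retraining of this step.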

3.1.3. Prototypical Networks

Prototypical networks compute a prototype representation for each class by averaging the embeddings of the support examples. The network then classifies new examples by comparing their embeddings to the class prototypes and assigning them to the nearest prototype. This approach is simple yet effective, and it can be easily extended to new classes with only a few examples.
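The prototype computation and nearest-prototype rule described above can be sketched in a few lines. The example assumes the embeddings are already available as plain lists of floats and uses squared Euclidean distance; the function names and toy data are illustrative.

```python
def mean_vector(vectors):
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def squared_distance(a, b):
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def build_prototypes(support):
    """support: list of (embedding, label). Returns label -> prototype."""
    by_label = {}
    for emb, label in support:
        by_label.setdefault(label, []).append(emb)
    return {label: mean_vector(embs) for label, embs in by_label.items()}

def classify(query, prototypes):
    """Assign the query to the class with the nearest prototype."""
    return min(prototypes,
               key=lambda label: squared_distance(query, prototypes[label]))

support = [([1.0, 1.0], "a"), ([3.0, 1.0], "a"),
           ([8.0, 9.0], "b"), ([10.0, 9.0], "b")]
prototypes = build_prototypes(support)
print(prototypes)                        # {'a': [2.0, 1.0], 'b': [9.0, 9.0]}
print(classify([3.0, 2.0], prototypes))  # a
```

Adding a new class only means calling `build_prototypes` with a few more labeled embeddings, which is what makes the method easy to extend.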

3.2. Model-Based Learning: Designing Adaptive Models

Model-based learning involves designing models that can quickly adapt their parameters to new tasks with only a few examples. This can be achieved through various techniques, such as memory-augmented neural networks and Bayesian meta-learning. The goal is to create models that can efficiently incorporate new information and generalize to unseen examples.

3.2.1. Memory-Augmented Neural Networks

Memory-augmented neural networks (MANNs) use an external memory module to store and retrieve information, allowing the model to quickly adapt to new tasks. The memory module can store both labeled and unlabeled examples, and the model reads from it when making predictions about new examples. This approach suits few-shot learning because new information can be written to memory as soon as it is seen, rather than being absorbed slowly through many gradient updates to the network’s weights.

3.2.2. Bayesian Meta-Learning

Bayesian meta-learning uses Bayesian inference to learn a prior distribution over model parameters, which can then be used to quickly adapt to new tasks with only a few examples. The prior distribution captures the knowledge gained from previous tasks, so a handful of new observations only has to refine that prior rather than determine the parameters from scratch. Because predictions come from a posterior distribution, this approach also provides uncertainty estimates, which are especially valuable when data is limited.
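The role of the prior can be seen in a toy conjugate-Gaussian example: a prior over a single task parameter is estimated from earlier tasks, then updated with two observations from a new task. This is a deliberately small illustration of the idea, not a full Bayesian meta-learning algorithm, and the numbers, the known noise variance, and the function names are assumptions.

```python
from statistics import mean, pvariance

def fit_prior(task_params):
    """Empirical-Bayes style prior: mean and variance of past task parameters."""
    return mean(task_params), pvariance(task_params)

def posterior(prior_mean, prior_var, observations, noise_var):
    """Conjugate Gaussian update for the mean parameter of a new task."""
    n = len(observations)
    precision = 1.0 / prior_var + n / noise_var
    post_mean = (prior_mean / prior_var + sum(observations) / noise_var) / precision
    return post_mean, 1.0 / precision

# Hypothetical parameters estimated on four earlier tasks.
prior_mean, prior_var = fit_prior([4.0, 6.0, 4.0, 6.0])
post_mean, post_var = posterior(prior_mean, prior_var, [9.0, 7.0], noise_var=2.0)
print(prior_mean, prior_var)  # 5.0 1.0
print(post_mean, post_var)    # 6.5 0.5
```

With only two observations averaging 8.0, the estimate lands at 6.5, between the prior mean and the new data, and the posterior variance shrinks from 1.0 to 0.5.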

3.3. Optimization-Based Learning: Fine-Tuning for Rapid Adaptation

Optimization-based learning focuses on learning how to optimize the model parameters so that it can quickly adapt to new tasks with only a few examples. This is typically achieved through meta-learning algorithms that learn to initialize the model parameters and update them efficiently. Common optimization-based learning techniques include Model-Agnostic Meta-Learning (MAML) and Reptile.

3.3.1. Model-Agnostic Meta-Learning (MAML)

MAML learns a set of initial model parameters that can be quickly fine-tuned to new tasks with only a few gradient steps. The meta-learning algorithm optimizes the initial parameters so that, after those few steps on a task’s small training set, the adapted model performs well on that task’s held-out examples. Doing this exactly requires differentiating through the fine-tuning steps, which involves second-order derivatives. The approach is called model-agnostic because it applies to any model trained with gradient descent.
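A toy version of the MAML update makes the two nested loops concrete. Here each task is a scalar quadratic loss with a different target, so the gradients can be written by hand. Real MAML uses separate support and query data per task and automatic differentiation; the tasks, learning rates, and function names below are assumptions chosen to keep the sketch short.

```python
def loss_grad(theta, target):
    """Gradient of the toy task loss 0.5 * (theta - target) ** 2."""
    return theta - target

def maml_step(theta, targets, inner_lr=0.1, outer_lr=0.5):
    """One meta-update over a batch of toy tasks."""
    meta_grad = 0.0
    for target in targets:
        # Inner loop: one gradient step adapts theta to this task.
        adapted = theta - inner_lr * loss_grad(theta, target)
        # Outer gradient: differentiate the post-adaptation loss with
        # respect to the initial theta (chain rule through the inner step).
        meta_grad += loss_grad(adapted, target) * (1.0 - inner_lr)
    return theta - outer_lr * meta_grad / len(targets)

theta = 0.0
for _ in range(200):
    theta = maml_step(theta, targets=[1.0, 2.0, 6.0])
print(round(theta, 3))  # 3.0
```

In this symmetric toy problem the learned initialization settles at the mean of the task targets, the point from which one gradient step gives the lowest average loss.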

3.3.2. Reptile

Reptile is a simplified, first-order alternative to MAML that also learns a set of initial model parameters that can be quickly fine-tuned to new tasks. Instead of differentiating through the fine-tuning process, Reptile repeatedly samples a task, runs several steps of ordinary gradient descent on it, and then moves the initial parameters a fraction of the way toward the adapted parameters. This avoids second-order derivatives, which makes it computationally efficient, and it can achieve performance comparable to MAML.
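The Reptile update is short enough to show in full on a toy problem. As a simplifying assumption, each task is a scalar quadratic loss with its own target, and the learning rates, step counts, and function names are illustrative.

```python
def sgd_on_task(theta, target, lr=0.1, steps=5):
    """A few plain gradient steps on the toy loss 0.5 * (theta - target) ** 2."""
    for _ in range(steps):
        theta -= lr * (theta - target)
    return theta

def reptile_step(theta, target, meta_lr=0.5):
    adapted = sgd_on_task(theta, target)
    # Move the initialization part of the way toward the adapted weights.
    return theta + meta_lr * (adapted - theta)

theta = 0.0
targets = [1.0, 2.0, 6.0]
for i in range(300):
    theta = reptile_step(theta, targets[i % len(targets)])
print(round(theta, 2))
```

Note that no gradient is ever taken through `sgd_on_task`; the meta-update only needs the starting and ending parameters, which is where the savings over MAML come from. The initialization ends up between the task targets rather than at any one of them.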

3.4. Multi-Scale Convolutional Neural Networks (CNNs)

Multi-scale CNNs capture information at different levels of detail in images and other types of data. These networks extract features at several scales, for example by applying convolutional filters of different sizes in parallel or by combining feature maps from layers at different resolutions, so the model sees both fine-grained details and broader contextual information. This can help in few-shot learning because a small support set is described from several complementary views rather than a single one.
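The parallel-filter idea can be sketched with 1D convolutions of different kernel sizes whose outputs are stacked as channels. In a real CNN the kernel weights are learned; here they are fixed averaging kernels, and the function names and kernel sizes are assumptions made for illustration.

```python
def conv1d_same(signal, kernel):
    """1D cross-correlation with zero padding so output length equals
    input length. Assumes an odd kernel length."""
    pad = len(kernel) // 2
    padded = [0.0] * pad + list(signal) + [0.0] * pad
    return [sum(k * padded[i + j] for j, k in enumerate(kernel))
            for i in range(len(signal))]

def multi_scale_block(signal, kernel_sizes=(1, 3, 5)):
    """Apply averaging kernels of several sizes; one output channel each."""
    return [conv1d_same(signal, [1.0 / size] * size) for size in kernel_sizes]

signal = [0.0, 0.0, 3.0, 0.0, 0.0, 0.0]
channels = multi_scale_block(signal)
for size, channel in zip((1, 3, 5), channels):
    print(size, [round(v, 2) for v in channel])
```

The size-1 channel reproduces the spike exactly, while the larger kernels spread it over a wider neighborhood, which is the fine-versus-context trade-off that multi-scale networks keep both sides of.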

4. Practical Applications of Few-Shot Learning with Multi-Scale

The ability to learn from limited data makes few-shot learning with multi-scale highly valuable in a variety of practical applications. These include image recognition, natural language processing, medical diagnosis, and robotics. In each of these areas, few-shot learning with multi-scale can significantly improve the efficiency and accuracy of machine learning models.

4.1. Image Recognition

In image recognition, few-shot learning with multi-scale can be used to recognize new objects or categories with only a few examples. This is particularly useful in scenarios where obtaining large labeled datasets is impractical, such as identifying rare species of plants or animals. By using multi-scale CNNs, the model can capture both the fine-grained details and the broader context of the images, allowing it to make accurate predictions even with limited data.

4.2. Natural Language Processing (NLP)

In natural language processing, few-shot learning with multi-scale can be used to perform tasks such as text classification, sentiment analysis, and named entity recognition with only a few examples. This is particularly useful in scenarios where obtaining large labeled datasets is challenging, such as for low-resource languages. By using multi-scale techniques, the model can capture information at different levels of granularity, allowing it to understand the context and meaning of the text even with limited data.

4.3. Medical Diagnosis

In medical diagnosis, few-shot learning with multi-scale can be used to identify rare diseases or conditions with only a few examples. This is particularly useful because medical data is often scarce and expensive to obtain. By using multi-scale imaging techniques, the model can capture both the fine-grained details and the broader context of the medical images, allowing it to make accurate diagnoses even with limited data.

4.4. Robotics

In robotics, few-shot learning with multi-scale can be used to train robots to perform new tasks with only a few demonstrations. This is particularly useful because it can be time-consuming and expensive to collect large datasets of robot actions. By using multi-scale sensor data, the robot can capture both the fine-grained details and the broader context of its environment, allowing it to learn new tasks quickly and efficiently.

5. Benefits of Using Few-Shot Learning with Multi-Scale

The benefits of using few-shot learning with multi-scale are numerous and significant. These include improved efficiency, enhanced accuracy, increased adaptability, and reduced data requirements. By leveraging these benefits, organizations can develop more effective and efficient machine learning solutions.

5.1. Improved Efficiency in Learning

Few-shot learning with multi-scale significantly improves the efficiency of learning by reducing the amount of data required to train a model. This means that models can be developed and deployed more quickly, saving time and resources. The ability to learn from limited data also makes it possible to tackle tasks that were previously impractical due to data scarcity.

5.2. Enhanced Accuracy with Limited Data

By capturing information at different levels of detail, multi-scale analysis enhances the accuracy of few-shot learning models. This allows the model to make more informed decisions, even when dealing with limited data. The integration of fine-grained and coarse-grained features enables the model to handle variations in scale, orientation, and viewpoint, making it more robust and reliable.

5.3. Increased Adaptability to New Tasks

Few-shot learning with multi-scale increases the adaptability of models to new tasks by enabling them to quickly generalize from limited examples. This means that models can be easily adapted to new domains or scenarios without requiring extensive retraining. The ability to adapt quickly is particularly valuable in dynamic environments where new tasks and challenges arise frequently.

5.4. Reduced Data Requirements and Costs

One of the most significant benefits of few-shot learning with multi-scale is its ability to reduce data requirements and costs. By learning from limited data, organizations can avoid the expense and effort associated with collecting and labeling large datasets. This makes it possible to develop machine learning solutions in scenarios where data is scarce or expensive to obtain.

6. Challenges and Limitations of Few-Shot Learning with Multi-Scale

Despite its many benefits, few-shot learning with multi-scale also presents several challenges and limitations. These include the risk of overfitting, the need for careful feature engineering, the computational complexity of multi-scale analysis, and the potential for bias in limited datasets. Understanding these challenges is essential for developing effective and reliable few-shot learning solutions.

6.1. Overfitting to Limited Data

One of the primary challenges of few-shot learning is the risk of overfitting to the limited available data. Overfitting occurs when the model learns the training data too well, resulting in poor generalization to new, unseen examples. This can be mitigated through techniques such as regularization, data augmentation, and transfer learning.

6.2. Complexity of Feature Engineering

Effective feature engineering is crucial for the success of few-shot learning with multi-scale. However, designing and selecting the right features can be a complex and time-consuming process. It requires a deep understanding of the data and the underlying task, as well as expertise in feature extraction and selection techniques.

6.3. Computational Demands

Multi-scale analysis can be computationally demanding, particularly when dealing with large datasets or complex models. Processing data at multiple scales multiplies the work done per example, which can significantly increase the computational overhead and may call for hardware acceleration or optimized algorithms.

6.4. Bias Amplification

Limited datasets may contain biases that can be amplified by few-shot learning models. This can lead to unfair or discriminatory outcomes, particularly in applications such as image recognition and natural language processing. It is essential to carefully analyze the data for potential biases and implement mitigation strategies to ensure fairness and equity.

7. Tools and Resources for Implementing Few-Shot Learning with Multi-Scale

Several tools and resources are available to help implement few-shot learning with multi-scale. These include popular machine learning frameworks such as TensorFlow and PyTorch, as well as specialized libraries and datasets for few-shot learning. Leveraging these tools and resources can significantly accelerate the development and deployment of few-shot learning solutions.

7.1. TensorFlow

TensorFlow is a widely used open-source machine learning framework developed by Google. It provides a comprehensive set of tools and libraries for building and training machine learning models, including the building blocks needed to implement few-shot learning methods and multi-scale architectures. TensorFlow’s flexible architecture and extensive documentation make it a popular choice for researchers and practitioners alike.

7.2. PyTorch

PyTorch is another popular open-source machine learning framework that is particularly well-suited for research and development. It offers a dynamic computational graph and a rich set of tools for building and training neural networks, which makes it straightforward to implement few-shot learning methods and multi-scale architectures. PyTorch’s intuitive interface and active community make it a favorite among researchers and developers.

7.3. Meta-Dataset

Meta-Dataset is a large-scale benchmark specifically designed for meta-learning and few-shot learning research. It combines several existing image datasets from different domains, allowing researchers to evaluate how well their models generalize across domains rather than within a single dataset. Meta-Dataset is a valuable resource for developing and testing new few-shot learning algorithms.

7.4. Open-Source Libraries

Several open-source libraries provide specialized tools and functions for few-shot learning and multi-scale analysis. These libraries include implementations of popular few-shot learning algorithms, as well as utilities for feature extraction, data augmentation, and model evaluation. Leveraging these libraries can significantly simplify the development process and improve the performance of few-shot learning models.

8. Best Practices for Few-Shot Learning with Multi-Scale

To achieve the best results with few-shot learning with multi-scale, it is important to follow certain best practices. These include careful data preprocessing, effective feature engineering, appropriate model selection, and rigorous evaluation. By adhering to these best practices, organizations can maximize the performance and reliability of their few-shot learning solutions.

8.1. Data Preprocessing and Augmentation

Data preprocessing and augmentation are essential steps for improving the performance of few-shot learning models. Preprocessing involves cleaning and transforming the data to make it more suitable for training, while augmentation involves creating additional training examples by applying various transformations to the existing data. Common data augmentation techniques include rotation, scaling, cropping, and flipping.
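Flipping and rotation are easy to show on a tiny image stored as a list of rows. This is a minimal sketch with hypothetical helper names; in practice an image library would handle these transforms, and they should only be applied when they preserve the label (a flipped digit 6, for instance, is no longer a 6).

```python
def flip_horizontal(image):
    return [row[::-1] for row in image]

def rotate_90(image):
    """Rotate clockwise by 90 degrees."""
    return [list(row) for row in zip(*image[::-1])]

def augment(image):
    """Return the original plus flipped and rotated variants."""
    return [image, flip_horizontal(image), rotate_90(image),
            rotate_90(rotate_90(image))]

image = [[1, 2],
         [3, 4]]
for variant in augment(image):
    print(variant)
# [[1, 2], [3, 4]]
# [[2, 1], [4, 3]]
# [[3, 1], [4, 2]]
# [[4, 3], [2, 1]]
```

Each support example becomes four here, which matters far more in a 5-shot setting than it would with thousands of images per class.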

8.2. Feature Selection and Extraction

Effective feature selection and extraction are crucial for capturing the most relevant information from the data. Feature selection keeps the subset of existing features that is most predictive of the target variable, while feature extraction derives new features that capture the underlying structure and patterns in the data. Principal component analysis (PCA), linear discriminant analysis (LDA), and autoencoders are common feature extraction techniques; feature selection typically relies on filter, wrapper, or embedded methods.

8.3. Model Selection and Hyperparameter Tuning

Choosing the right model and tuning its hyperparameters are critical for achieving the best results with few-shot learning. This involves experimenting with different models and hyperparameters to find the combination that yields the best performance on the validation set. Techniques such as grid search, random search, and Bayesian optimization can be used for hyperparameter tuning.

8.4. Evaluation and Validation Techniques

Rigorous evaluation and validation are essential for ensuring the reliability and generalizability of few-shot learning models. Few-shot models are usually evaluated on many episodes sampled from held-out classes that were never seen during training, with accuracy averaged across episodes and reported together with a confidence interval. Techniques such as cross-validation can also be used to estimate generalization error. It is also important to evaluate the model’s performance on different subsets of the data to identify potential biases and limitations.
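Because a single few-shot episode contains very few query examples, results are usually summarized over many episodes. The sketch below computes a mean accuracy with a normal-approximation 95% confidence interval. The per-episode accuracies are made-up numbers for illustration, and a real evaluation would use hundreds of episodes or more.

```python
import math
from statistics import mean, stdev

def summarize(accuracies):
    """Mean accuracy and a normal-approximation 95% confidence half-width."""
    m = mean(accuracies)
    half_width = 1.96 * stdev(accuracies) / math.sqrt(len(accuracies))
    return m, half_width

# Hypothetical per-episode accuracies from held-out test episodes.
episode_accuracies = [0.60, 0.80, 0.70, 0.90, 0.50, 0.70, 0.80, 0.60]
m, hw = summarize(episode_accuracies)
print(f"{m:.3f} +/- {hw:.3f}")  # 0.700 +/- 0.091
```

Reporting the interval alongside the mean makes it clear when an apparent improvement between two models is smaller than the episode-to-episode noise.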

9. Future Trends in Few-Shot Learning with Multi-Scale

The field of few-shot learning with multi-scale is rapidly evolving, with new techniques and applications emerging all the time. Some of the key trends include the development of more sophisticated meta-learning algorithms, the integration of multi-modal data, and the application of few-shot learning to new domains. By staying abreast of these trends, organizations can position themselves to take advantage of the latest advances in this exciting field.

9.1. Advances in Meta-Learning

Meta-learning is a key enabler of few-shot learning, and researchers are constantly developing new and improved meta-learning algorithms. Some of the promising areas of research include meta-learning with attention mechanisms, meta-learning with memory modules, and meta-learning with Bayesian inference. These advances are expected to further improve the performance and adaptability of few-shot learning models.

9.2. Integration of Multi-Modal Data

Integrating multi-modal data, such as images, text, and audio, can significantly enhance the performance of few-shot learning models. By combining information from different modalities, the model can gain a more comprehensive understanding of the data and make more accurate predictions. This is particularly useful in applications such as robotics, where the robot needs to integrate information from multiple sensors to understand its environment.

9.3. Applications in New Domains

Few-shot learning is being applied to an increasingly wide range of domains, including healthcare, finance, and education. In healthcare, few-shot learning can be used to diagnose rare diseases and personalize treatment plans. In finance, it can be used to detect fraud and predict market trends. In education, it can be used to personalize learning experiences and assess student performance.

10. Conclusion: Embracing Few-Shot Learning with Multi-Scale

Few-shot learning with multi-scale offers a powerful approach to addressing the challenges of learning from limited data. By leveraging the concepts of meta-learning, representation learning, and multi-scale analysis, organizations can develop more efficient, accurate, and adaptable machine learning solutions. While there are challenges and limitations to consider, the benefits of few-shot learning with multi-scale are numerous and significant. By embracing this innovative approach and following best practices, organizations can unlock the full potential of machine learning in data-constrained environments.

Are you eager to explore more about few-shot learning with multi-scale and its potential applications? Visit LEARNS.EDU.VN today to discover in-depth articles, courses, and resources tailored to your learning needs. Unlock the power of efficient learning and transform your approach to machine learning.


Frequently Asked Questions (FAQs)

Q1: What is few-shot learning?

Few-shot learning is a type of machine learning where the model learns to recognize and classify new objects or patterns with only a few examples, contrasting with traditional machine learning’s need for large datasets.

Q2: How does multi-scale analysis enhance few-shot learning?

Multi-scale analysis allows the model to capture information at different levels of detail, integrating both fine-grained features and broader context, thereby enhancing robustness and accuracy with limited data.

Q3: What are the core concepts of few-shot learning with multi-scale?

The core concepts include meta-learning (learning to learn), representation learning (extracting meaningful features), and multi-scale representation learning (capturing information at different levels).

Q4: What are some methodologies used in few-shot learning with multi-scale?

Methodologies include metric-based learning (measuring similarity), model-based learning (designing adaptive models), optimization-based learning (fine-tuning for rapid adaptation), and multi-scale convolutional neural networks (CNNs).

Q5: What are the practical applications of few-shot learning with multi-scale?

Practical applications include image recognition, natural language processing, medical diagnosis, and robotics, where learning from limited data is crucial.

Q6: What are the benefits of using few-shot learning with multi-scale?

Benefits include improved learning efficiency, enhanced accuracy with limited data, increased adaptability to new tasks, and reduced data requirements and costs.

Q7: What are the challenges and limitations of few-shot learning with multi-scale?

Challenges include overfitting to limited data, complexity of feature engineering, high computational demands, and the potential for bias amplification.

Q8: What tools and resources are available for implementing few-shot learning with multi-scale?

Tools include TensorFlow, PyTorch, Meta-Dataset, and various open-source libraries providing specialized functions for few-shot learning.

Q9: What are the best practices for few-shot learning with multi-scale?

Best practices include careful data preprocessing and augmentation, effective feature selection and extraction, appropriate model selection and hyperparameter tuning, and rigorous evaluation and validation techniques.

Q10: What are the future trends in few-shot learning with multi-scale?

Future trends include advances in meta-learning, the integration of multi-modal data, and the application of few-shot learning to new domains such as healthcare, finance, and education.
