How Do Neurons Learn? This question is fundamental to understanding how we acquire knowledge and skills and how we adapt to our environment. At LEARNS.EDU.VN, we explore the mechanisms that allow the brain to change: synaptic plasticity, the predictive learning rule, contrastive Hebbian learning, spontaneous activity, and energy-efficient coding. This article draws on research in neural networks and computational neuroscience to explain each of them.

1. Understanding Neuronal Learning: An Introduction

Neuronal learning refers to the process by which neurons modify their connections and responses over time, enabling the brain to adapt and acquire new information. This remarkable ability, known as neuroplasticity, is the foundation of learning and memory. Neurons, the fundamental units of the brain, communicate through synapses, and the strength of these connections can change based on experience.

1.1. The Essence of Neuroplasticity

Neuroplasticity is the brain’s capacity to reorganize itself by forming new neural connections throughout life. This allows neurons to adjust their activities in response to new situations or changes in their environment. Neuroplasticity is crucial for:

- Learning New Skills: Acquiring a new language, playing a musical instrument, or mastering a sport.

- Memory Formation: Encoding and storing experiences.

- Recovery from Brain Injury: Reassigning functions to undamaged areas of the brain.

- Adapting to Sensory Changes: Adjusting to vision loss or hearing impairment.

Neuroplasticity enables the brain to rewire itself, making learning and adaptation possible throughout the lifespan.

1.2. Synaptic Plasticity: The Cellular Mechanism

Synaptic plasticity is the cellular basis of learning and memory. It refers to the ability of synapses to strengthen or weaken over time in response to increases or decreases in their activity. Two primary forms of synaptic plasticity are:

- Long-Term Potentiation (LTP): A persistent strengthening of synapses based on recent patterns of activity. LTP is widely considered a key mechanism for learning and memory.

- Long-Term Depression (LTD): A long-lasting decrease in the strength of synapses that occurs when the presynaptic neuron is active and the postsynaptic neuron is weakly activated or not activated at all.

These processes modify the efficiency of synaptic transmission, allowing the brain to encode and store information.

2. The Predictive Learning Rule: A Novel Approach

Recent research has introduced the predictive learning rule, a novel approach to understanding how neurons learn by predicting future activity. This rule suggests that neurons adjust their connections based on the difference between their predicted and actual activity, allowing them to optimize their responses to stimuli.

2.1. Core Principles of the Predictive Learning Rule

The predictive learning rule is inspired by sensory processing in the cortex, where initial bottom-up activity is followed by top-down modulation. The algorithm involves two phases:

- Free Phase (Bottom-Up Activity): Initial stimulus-driven activity reflecting visual attributes.

- Clamped Phase (Top-Down Modulation): Network output is clamped, representing abstract information or categorization.

The key insight is that the initial bottom-up activity allows each neuron to predict the steady-state activity it would reach in the free phase. The mismatch between this predicted free-phase activity and the activity actually observed in the clamped phase is then used as a teaching signal.
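
To make the prediction step concrete, here is a minimal sketch of estimating steady-state activity from the first few time steps of a response. Everything in it is an assumption made for illustration: the exponential relaxation dynamics, the choice of four early time steps, and the least-squares linear predictor are not taken from the study described here.

```python
import numpy as np

rng = np.random.default_rng(0)

def simulate_relaxation(x0, target, rate=0.3, steps=12):
    """Toy dynamics (an assumption): activity relaxes from x0 toward target."""
    trace = [x0]
    for _ in range(steps - 1):
        trace.append(trace[-1] + rate * (target - trace[-1]))
    return np.array(trace)

# Hypothetical data: 200 trials with random starting points and end points.
n_trials, n_early = 200, 4
x0 = rng.uniform(0.0, 1.0, n_trials)
targets = rng.uniform(0.0, 1.0, n_trials)
traces = np.stack([simulate_relaxation(a, b) for a, b in zip(x0, targets)])

early = traces[:, :n_early]  # initial bottom-up activity
steady = traces[:, -1]       # activity at the last simulated step

# Linear predictor with a bias term, fit by least squares.
design = np.column_stack([early, np.ones(n_trials)])
coef, *_ = np.linalg.lstsq(design, steady, rcond=None)
predicted = design @ coef
max_error = float(np.max(np.abs(predicted - steady)))
print(f"largest prediction error: {max_error:.2e}")
```

Because the toy dynamics are linear and noise-free, the late activity is an exact linear function of the early activity, so the error is at the level of floating-point rounding. Real neuronal responses are noisy, and the fit would be correspondingly less exact.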

2.2. Mathematical Formulation

The synaptic weight update in the predictive learning rule is given by:

$$\Delta w_{ij} = \alpha \left( \hat{x}_i \hat{x}_j - \tilde{x}_i \tilde{x}_j \right).$$

Where:

- \(\Delta w_{ij}\) is the change in synaptic weight between neurons i and j.

- \(\alpha\) is the learning rate.

- \(\hat{x}_i\) and \(\hat{x}_j\) are the actual activities of neurons i and j in the clamped phase.

- \(\tilde{x}_i\) and \(\tilde{x}_j\) are the predicted activities of neurons i and j in the free phase.

To make this rule more biologically plausible, the equation is simplified to:

$$\Delta w_{ij} = \alpha \left( \hat{x}_i \hat{x}_j - \hat{x}_i \tilde{x}_j \right) = \alpha \hat{x}_i \left( \hat{x}_j - \tilde{x}_j \right).$$

This simplified rule allows a neuron to compare its actual activity with its predicted activity and adjust synaptic weights accordingly.
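
The simplified rule can be sketched in a few lines of NumPy. This is an illustration, not the implementation used in the study: the function name `predictive_update`, the example activities, and the reading of i as the presynaptic neuron and j as the postsynaptic neuron are assumptions.

```python
import numpy as np

def predictive_update(x_hat_pre, x_hat_post, x_tilde_post, alpha=0.01):
    """Return dW with dW[i, j] = alpha * x_hat_pre[i] * (x_hat_post[j] - x_tilde_post[j])."""
    # Each postsynaptic neuron j compares its actual and predicted activity.
    error = x_hat_post - x_tilde_post
    # The outer product scales that error by each presynaptic activity.
    return alpha * np.outer(x_hat_pre, error)

# Hypothetical activities: three presynaptic and two postsynaptic neurons.
x_hat_pre = np.array([1.0, 0.5, 0.0])   # actual presynaptic activity
x_hat_post = np.array([0.8, 0.2])       # actual postsynaptic activity
x_tilde_post = np.array([0.6, 0.4])     # predicted postsynaptic activity

dW = predictive_update(x_hat_pre, x_hat_post, x_tilde_post, alpha=0.1)
print(dW)
```

In this example, weights onto the first postsynaptic neuron grow because it was more active than predicted, weights onto the second shrink because it was less active than predicted, and a silent presynaptic neuron (the third row) leaves its weights unchanged.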

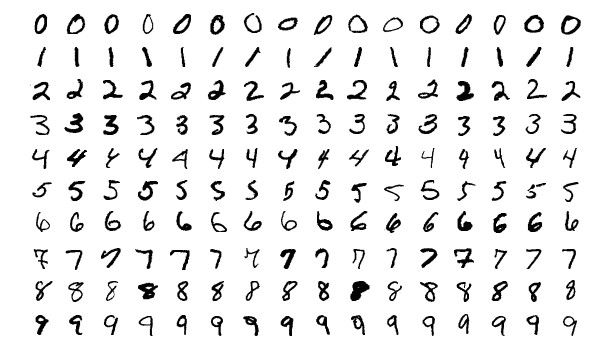

2.3. Validation Through Neural Network Simulations

To validate the predictive learning rule, simulations were performed using a neural network trained on the MNIST dataset for handwritten digit recognition. The network, comprising 784 input units, 1,000 hidden units, and 10 output units, achieved a 1.9% error rate, comparable to networks trained with backpropagation.

Figure: Examples of handwritten digits from the MNIST dataset used in neural network training.

The simulations demonstrated that neurons could accurately predict future free-phase activity. For example, after training, the network’s output neurons converged to a steady state that closely matched the predicted activity. Hidden units also exhibited predictable dynamics, further supporting the effectiveness of the predictive learning rule.

2.4. Biologically Motivated Network Architectures

The predictive learning rule was further tested in biologically motivated network architectures, including:

- Networks with Excitatory and Inhibitory Neurons: Simulating Dale’s law, where 80% of hidden neurons were excitatory and 20% were inhibitory.

- Networks without Symmetric Weights: Achieving performance similar to the original network.

- Networks with Spiking Neurons: Demonstrating the rule’s applicability to more realistic neuronal models.

- Deep Convolutional Networks: Achieving comparable performance to backpropagation through time (BPTT) on the CIFAR-10 dataset.

These tests highlight the robustness and versatility of the predictive learning rule in diverse neural network configurations.
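
As an illustration of the first configuration in the list above, the sketch below builds a hidden-to-output weight matrix in which 80% of the 1,000 hidden neurons are excitatory and 20% are inhibitory. How the study enforced this constraint is not described here, so the approach shown (fixing a sign per presynaptic neuron and zeroing any weight that crosses sign after an update) is an assumption, as are the weight scales and the helper name `project_to_dale`.

```python
import numpy as np

rng = np.random.default_rng(0)
n_hidden, n_out = 1000, 10
n_exc = round(0.8 * n_hidden)  # 800 excitatory, 200 inhibitory

# One fixed sign per presynaptic (hidden) neuron.
signs = np.ones(n_hidden)
signs[n_exc:] = -1.0

# Initial weights: random magnitudes with the sign of the sending neuron.
magnitudes = np.abs(rng.normal(0.0, 0.1, size=(n_hidden, n_out)))
W = signs[:, None] * magnitudes

def project_to_dale(W, signs):
    """Set to zero any weight whose sign contradicts its presynaptic neuron."""
    return np.where(W * signs[:, None] >= 0, W, 0.0)

# Stand-in for a learning step, followed by re-imposing the constraint.
W_updated = W + rng.normal(0.0, 0.05, size=W.shape)
W_updated = project_to_dale(W_updated, signs)
```

After the projection, every outgoing weight of an excitatory neuron is non-negative and every outgoing weight of an inhibitory neuron is non-positive, whatever the learning step did.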

2.5. Empirical Evidence from Animal Studies

Real-world validation was obtained through neuronal recordings from the auditory cortex of awake rats. The study involved presenting six tones repeatedly and analyzing the neuronal responses. The results showed:

- Neurons have predictable dynamics.

- Future activity can be estimated from initial neuronal responses.

- Long-term changes in neuronal firing rates correlate with differences between evoked and predicted activity.

These findings provide empirical support for the predictive learning rule in biological systems, with the caveat that the link to long-term firing-rate changes is correlational.

3. Contrastive Hebbian Learning: A Comparative Perspective

Contrastive Hebbian Learning (CHL) is another significant approach to neural learning, in which synaptic connections are adjusted by contrasting neural activity across two separately run phases: the free phase and the clamped phase. The predictive learning rule, by contrast, folds both into a single sequence of activity, as discussed in Section 3.4.

3.1. The Two Phases of Contrastive Hebbian Learning

CHL operates through two primary phases:

- Free Phase: The network processes input freely, allowing activity to propagate through the network.

- Clamped Phase: The output neurons are clamped to the desired target values, providing a teaching signal.

The synaptic weight update is determined by the difference in activity patterns between these two phases.

3.2. Mathematical Representation

The weight update rule for CHL is given by:

$$\Delta w_{ij} = \eta \left( x_i^+ x_j^+ - x_i^- x_j^- \right)$$

Where:

- \(\Delta w_{ij}\) is the change in the synaptic weight between neurons i and j.

- \(\eta\) is the learning rate.

- \(x_i^+\) and \(x_j^+\) are the activities of neurons i and j in the clamped phase.

- \(x_i^-\) and \(x_j^-\) are the activities of neurons i and j in the free phase.

This rule strengthens connections between neurons that are active together in the clamped phase and weakens connections between neurons that are active together in the free phase.
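
A minimal NumPy sketch of this update is shown below. The function name `chl_update`, the three-neuron example, and the choice to zero the diagonal (no self-connections) are assumptions made for illustration.

```python
import numpy as np

def chl_update(x_clamped, x_free, eta=0.01):
    """Return dW with dW[i, j] = eta * (x_clamped[i]*x_clamped[j] - x_free[i]*x_free[j])."""
    hebbian = np.outer(x_clamped, x_clamped)   # co-activity in the clamped phase
    anti_hebbian = np.outer(x_free, x_free)    # co-activity in the free phase
    dW = eta * (hebbian - anti_hebbian)
    np.fill_diagonal(dW, 0.0)                  # assumption: no self-connections
    return dW

# Hypothetical activities for a three-neuron network.
x_clamped = np.array([1.0, 0.0, 1.0])
x_free = np.array([1.0, 1.0, 0.0])

dW = chl_update(x_clamped, x_free, eta=0.5)
print(dW)
```

Neurons 0 and 2 are co-active only in the clamped phase, so their connection is strengthened (+0.5). Neurons 0 and 1 are co-active only in the free phase, so their connection is weakened (-0.5). The update is symmetric because the rule treats i and j identically.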

3.3. Strengths and Limitations

CHL offers several advantages, including:

- Locality: Weight updates depend only on the activities of pre- and post-synaptic neurons.

- Biological Plausibility: CHL aligns with Hebbian learning principles observed in biological systems.

However, CHL also has limitations:

- Two-Phase Requirement: The need for two distinct phases may not be biologically realistic.

- Convergence Issues: Ensuring the network converges to a stable equilibrium can be challenging.

3.4. Comparison with Predictive Learning Rule

The predictive learning rule addresses some limitations of CHL by combining both activity phases into one, inspired by sensory processing in the cortex. This approach eliminates the need for separate free and clamped phases, making it more biologically plausible. Additionally, the predictive learning rule has shown robust performance in various network architectures and real-world data, suggesting it is a powerful alternative to CHL.

4. The Role of Spontaneous Activity in Learning

Spontaneous brain activity, which occurs in the absence of external stimuli, plays a crucial role in shaping neuronal dynamics and facilitating learning. This intrinsic activity, often observed during sleep, shares similarities with stimulus-evoked activity and can influence how neurons respond to new experiences.

4.1. Characteristics of Spontaneous Activity

Spontaneous activity is characterized by:

- Packet-Based Communication: Neuronal activity occurs in bursts called packets, lasting approximately 50-300 ms.

- Resemblance to Evoked Patterns: Spontaneous packets resemble patterns evoked by external stimuli.

- High Energy Consumption: Spontaneous activity accounts for over 90% of brain energy consumption.

These features suggest that spontaneous activity is not random noise but rather an organized process that contributes to brain function.

4.2. Predictive Models from Spontaneous Activity

Research has shown that spontaneous activity can be used to predict neuronal dynamics during stimulus presentation. By extracting spontaneous packets and analyzing their patterns, it is possible to build predictive models that estimate how neurons will respond to external stimuli.
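
The idea can be sketched with synthetic data: fit a predictor on "spontaneous" packets only, then test it on "evoked" packets that were never used for fitting. The packet shape, amplitudes, noise level, and the linear least-squares predictor below are all assumptions chosen for illustration; none of them comes from the recordings discussed in this article.

```python
import numpy as np

rng = np.random.default_rng(1)
t = np.arange(10)                    # ten time bins per packet (arbitrary units)
template = t * np.exp(-t / 3.0)      # hypothetical packet shape

def make_packets(n, amp_low, amp_high, noise_sd=0.02):
    """Synthetic packets: a shared shape scaled by a random amplitude, plus noise."""
    amps = rng.uniform(amp_low, amp_high, size=(n, 1))
    return amps * template + rng.normal(0.0, noise_sd, size=(n, t.size))

def split(packets, n_early=3, late_start=5):
    """Early bins (plus a bias column) as predictors, mean late activity as target."""
    early = np.column_stack([packets[:, :n_early], np.ones(len(packets))])
    late = packets[:, late_start:].mean(axis=1)
    return early, late

spont = make_packets(300, 0.5, 1.0)    # stand-in for spontaneous packets
evoked = make_packets(100, 0.8, 1.5)   # stand-in for stimulus-evoked packets

# Fit on spontaneous packets only.
X_spont, y_spont = split(spont)
coef, *_ = np.linalg.lstsq(X_spont, y_spont, rcond=None)

# Evaluate on evoked packets.
X_evoked, y_evoked = split(evoked)
y_pred = X_evoked @ coef
r = float(np.corrcoef(y_pred, y_evoked)[0, 1])
print(f"correlation between predicted and actual late activity: {r:.3f}")
```

The transfer works here only because both sets of packets were generated from the same underlying shape, which mirrors the observation that spontaneous packets resemble evoked patterns.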

Figure: Illustration of spontaneous brain activity patterns in the auditory cortex.

4.3. Implications for Learning

The ability to predict stimulus-evoked responses from spontaneous activity has significant implications for learning:

- Training Data: Spontaneous activity provides training data for neurons to build predictive models.

- Internal Models: Neurons develop internal models of the environment based on intrinsic activity patterns.

- Enhanced Learning: The brain can better anticipate and respond to new stimuli by leveraging spontaneous activity.

These findings highlight the importance of spontaneous activity in shaping neuronal learning and adaptation.

5. Energy Efficiency and Learning: The Lazy Neuron Principle

Neurons consume a significant amount of energy to maintain electrical activity and synaptic transmission. Recent theories suggest that neurons optimize their energy usage by adhering to the “lazy neuron principle,” which posits that neurons aim to maximize their impact while minimizing their activity.

5.1. Energy Consumption in Neurons

The primary energy consumers in neurons are:

- Synaptic Potentials: Accounting for approximately 50% of ATP usage.

- Action Potentials: Accounting for about 20% of ATP usage.

- Housekeeping Functions: Accounting for the remaining 30% of energy consumption.

To balance energy supply and demand, neurons adjust their synaptic connections and activity patterns.

5.2. Maximizing Energy Balance

The energy balance of a neuron can be expressed as:

$$E_j = -\varepsilon - b_1 \left( \sum\nolimits_{i} w_{ij} x_i \right)^{\beta_1} + b_2 \left( \sum\nolimits_{k} x_k \right)^{\beta_2}.$$

Where:

- \(E_j\) is the energy balance of neuron j.

- \(\varepsilon\) represents energy consumed by housekeeping functions.

- The second term represents energy consumption for electrical activity.

- The third term represents energy supply through neurovascular coupling.

To maximize energy balance, a neuron must minimize its electrical activity while maximizing its impact on other neurons’ activities.
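
The energy balance can be evaluated directly to see this trade-off. In the sketch below, the function name `energy_balance` and all parameter values (housekeeping cost, \(b_1\), \(b_2\), and both exponents) are hypothetical and chosen only to keep the arithmetic easy to follow. Inputs are assumed to be non-negative.

```python
import numpy as np

def energy_balance(w_in, x_in, x_down, eps=1.0, b1=1.0, b2=1.0, beta1=2.0, beta2=2.0):
    """E_j = -eps - b1 * (sum_i w_ij x_i)**beta1 + b2 * (sum_k x_k)**beta2."""
    cost = b1 * np.dot(w_in, x_in) ** beta1   # energy spent on electrical activity
    supply = b2 * np.sum(x_down) ** beta2     # energy supplied via downstream activity
    return -eps - cost + supply

x_in = np.array([1.0, 1.0])     # presynaptic activities x_i
x_down = np.array([1.0, 1.0])   # downstream activities x_k

# Same downstream activity, two different input drives.
e_strong = energy_balance(np.array([0.5, 0.5]), x_in, x_down)
e_weak = energy_balance(np.array([0.25, 0.25]), x_in, x_down)
print(e_strong, e_weak)
```

With these values, the strongly driven neuron has a balance of 2.0 and the weakly driven one 2.75: for the same downstream activity, lower input drive leaves a better balance. The sketch holds downstream activity fixed, whereas in a real network it would depend on the neuron's own output.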

5.3. The Lazy Neuron Principle

The “lazy neuron principle” encapsulates this balance, stating that neurons strive for:

- Maximum Impact: Exerting a strong influence on downstream neurons.

- Minimum Activity: Consuming as little energy as possible.

This principle suggests that neurons learn to optimize their synaptic weights to achieve the greatest effect with the least expenditure of energy.

5.4. Implications for Neural Learning

The lazy neuron principle has profound implications for understanding neural learning:

- Efficient Coding: Neurons learn to represent information efficiently, minimizing redundancy.

- Synaptic Optimization: Synaptic weights are adjusted to maximize energy balance.

- Adaptive Strategies: Neurons adapt their activity patterns to optimize energy usage.

These insights highlight the importance of energy efficiency in shaping neural learning and function.

6. Practical Applications and Future Directions

Understanding how neurons learn has significant implications for various fields, including artificial intelligence, education, and neuroscience. By leveraging insights from predictive learning, contrastive Hebbian learning, and other learning rules, we can develop more effective learning algorithms, educational strategies, and therapeutic interventions.

6.1. Artificial Intelligence

In artificial intelligence, understanding neuronal learning can lead to:

- More Efficient Algorithms: Developing learning algorithms that mimic the brain’s energy efficiency.

- Robust Neural Networks: Creating neural networks that can adapt and learn in dynamic environments.

- Biologically Inspired Systems: Designing AI systems that are more aligned with biological learning mechanisms.

6.2. Education

In education, insights from neuronal learning can inform:

- Personalized Learning Strategies: Tailoring educational approaches to individual learners' needs.

- Effective Teaching Methods: Implementing teaching methods that optimize synaptic plasticity and memory formation.

- Enhanced Cognitive Training: Developing cognitive training programs that leverage neuroplasticity to improve cognitive function.

6.3. Neuroscience and Medicine

In neuroscience and medicine, understanding neuronal learning can contribute to:

- Therapeutic Interventions: Developing therapies to promote recovery from brain injury and neurological disorders.

- Cognitive Enhancement: Creating interventions to improve cognitive function and prevent age-related cognitive decline.

- Neuroplasticity Research: Advancing our understanding of the brain’s capacity to adapt and learn throughout life.

6.4. Future Research Directions

Future research directions in neuronal learning include:

- Exploring the Role of Glial Cells: Investigating how glial cells contribute to synaptic plasticity and learning.

- Studying the Impact of Neuromodulators: Understanding how neuromodulators influence neuronal learning and memory.

- Developing Advanced Imaging Techniques: Creating new imaging techniques to visualize neuronal activity and synaptic changes in real-time.

7. Key Takeaways and Resources at LEARNS.EDU.VN

Understanding how neurons learn is essential for anyone interested in the brain, learning, and artificial intelligence. The predictive learning rule, contrastive Hebbian learning, and the role of spontaneous activity offer valuable insights into the mechanisms underlying neuroplasticity and adaptation.

7.1. Summary of Key Concepts

- Neuroplasticity: The brain’s ability to reorganize itself by forming new neural connections.

- Synaptic Plasticity: The capacity of synapses to strengthen or weaken over time.

- Predictive Learning Rule: A novel approach where neurons adjust connections based on predicted vs. actual activity.

- Contrastive Hebbian Learning: A learning rule that strengthens connections between neurons that are active together during the clamped phase and weakens connections between neurons that are active together during the free phase.

- Spontaneous Activity: Intrinsic brain activity that plays a crucial role in shaping neuronal dynamics.

- Lazy Neuron Principle: Neurons aim to maximize their impact while minimizing their activity.

7.2. Resources at LEARNS.EDU.VN

At LEARNS.EDU.VN, we offer a wealth of resources to help you explore these concepts further. Visit our website to find:

- Detailed Articles: In-depth explanations of neuronal learning mechanisms.

- Expert Insights: Perspectives from leading neuroscientists and educators.

- Practical Tips: Strategies to enhance your learning and cognitive function.

- Interactive Courses: Engage in courses designed to give you hands-on experience and deeper insight.

8. Conclusion: Empowering Learning Through Knowledge

Understanding how neurons learn is a journey into the heart of intelligence and adaptation. By embracing the latest research and innovative approaches, we can unlock the full potential of the brain and transform the way we learn, teach, and interact with the world. Visit LEARNS.EDU.VN to discover more and embark on your own journey of discovery.

8.1. Encouragement and Call to Action

Ready to dive deeper into the world of neuronal learning? LEARNS.EDU.VN offers a range of resources to support your journey. Explore our articles, courses, and expert insights to unlock the secrets of brain plasticity and enhance your cognitive potential.

8.2. Contact Information

For more information or to get in touch with our team, please visit our website or contact us at:

Address: 123 Education Way, Learnville, CA 90210, United States

WhatsApp: +1 555-555-1212

Website: LEARNS.EDU.VN

Join us at LEARNS.EDU.VN and discover how understanding neuronal learning can empower you to achieve your learning goals.

9. Frequently Asked Questions (FAQs) About Neuronal Learning

9.1. What is neuronal learning?

Neuronal learning is the process by which neurons in the brain modify their connections and responses over time, allowing the brain to adapt and acquire new information.

9.2. What is neuroplasticity?

Neuroplasticity is the brain’s ability to reorganize itself by forming new neural connections throughout life, enabling adaptation and learning.

9.3. What is synaptic plasticity?

Synaptic plasticity is the ability of synapses to strengthen or weaken over time in response to increases or decreases in their activity, serving as the cellular basis of learning and memory.

9.4. What is Long-Term Potentiation (LTP)?

LTP is a persistent strengthening of synapses based on recent patterns of activity, widely considered a key mechanism for learning and memory.

9.5. What is Long-Term Depression (LTD)?

LTD is a long-lasting decrease in the strength of synapses that occurs when the presynaptic neuron is active and the postsynaptic neuron is weakly activated or not activated at all.

9.6. What is the predictive learning rule?

The predictive learning rule is a novel approach where neurons adjust their connections based on the difference between their predicted and actual activity, optimizing their responses to stimuli.

9.7. What is contrastive Hebbian learning (CHL)?

CHL is a learning rule that adjusts synaptic connections based on neural activity during two phases: the free phase and the clamped phase.

9.8. What is the role of spontaneous activity in learning?

Spontaneous activity, occurring in the absence of external stimuli, plays a crucial role in shaping neuronal dynamics and facilitating learning by providing training data for neurons.

9.9. What is the “lazy neuron principle”?

The “lazy neuron principle” posits that neurons aim to maximize their impact on downstream neurons while minimizing their own activity and energy consumption.

9.10. How can I learn more about neuronal learning?

Visit LEARNS.EDU.VN to access detailed articles, expert insights, practical tips, and interactive courses on neuronal learning.

By understanding these fundamental principles and exploring the resources available at LEARNS.EDU.VN, you can gain a deeper appreciation for the remarkable ability of the brain to learn and adapt.