Are you intrigued by the groundbreaking advancements in artificial intelligence? What Is Transformer In Machine Learning? Discover how this innovative neural network architecture is revolutionizing the field! At LEARNS.EDU.VN, we aim to provide comprehensive insights into transformer models, their applications, and their impact on various industries. Explore the intricacies of attention mechanisms and how they enable machines to understand context in sequential data. Enhance your knowledge with our expert guides and unlock the potential of AI.

1. Understanding Transformer Models: The Basics

Transformer models have emerged as a dominant force in the realm of machine learning, particularly in natural language processing (NLP). But what exactly is a transformer model? At its core, a transformer model is a neural network architecture that leverages self-attention mechanisms to process sequential data, such as text, audio, and video. Unlike traditional recurrent neural networks (RNNs) and convolutional neural networks (CNNs), transformers can process entire sequences in parallel, leading to significant improvements in training speed and performance.

1.1 The Genesis of Transformers

The transformer architecture was first introduced in the groundbreaking 2017 paper, “Attention is All You Need,” by Vaswani et al. from Google Research. This paper marked a paradigm shift in the field of NLP, as it demonstrated the power of attention mechanisms in capturing long-range dependencies within sequences. The transformer architecture has since become the foundation for many state-of-the-art NLP models, including BERT, GPT, and T5.

1.2 Key Components of a Transformer Model

A transformer model consists of several key components that work together to process and understand sequential data:

-

Input Embedding: Converts input tokens (e.g., words) into vector representations.

-

Positional Encoding: Adds information about the position of tokens in the sequence, as transformers are inherently order-agnostic.

-

Encoder: Processes the input sequence and generates a contextualized representation.

-

Decoder: Generates the output sequence based on the encoded representation.

-

Attention Mechanism: Enables the model to focus on relevant parts of the input sequence when processing each token.

1.3 The Power of Self-Attention

The self-attention mechanism is the heart of the transformer architecture. It allows the model to weigh the importance of different parts of the input sequence when processing each token. This is achieved by calculating attention scores between each pair of tokens, which are then used to weight the corresponding token representations.

Self-attention enables the model to capture long-range dependencies and understand the context of each token within the sequence. This is particularly important in NLP tasks, where the meaning of a word can depend on its surrounding words and the overall context of the sentence.

1.4 Advantages of Transformer Models

Transformer models offer several advantages over traditional RNNs and CNNs:

-

Parallel Processing: Transformers can process entire sequences in parallel, leading to significant improvements in training speed.

-

Long-Range Dependencies: Self-attention allows transformers to capture long-range dependencies within sequences, which is crucial for understanding context.

-

Scalability: Transformers can be scaled to handle large datasets and complex tasks, making them suitable for a wide range of applications.

-

Versatility: Transformers can be applied to various data types, including text, audio, and video, making them a versatile tool for machine learning.

2. The Architecture of Transformer Models: A Detailed Look

To fully appreciate the power of transformer models, it is essential to understand their underlying architecture. The transformer architecture consists of two main components: the encoder and the decoder.

2.1 The Encoder: Processing the Input Sequence

The encoder is responsible for processing the input sequence and generating a contextualized representation. It consists of multiple layers, each containing two sub-layers:

-

Multi-Head Self-Attention: This sub-layer applies the self-attention mechanism to the input sequence, allowing the model to weigh the importance of different parts of the sequence when processing each token.

-

Feed-Forward Network: This sub-layer applies a feed-forward neural network to each token representation, further processing and refining the contextualized representation.

Each sub-layer is followed by a residual connection and layer normalization, which helps to stabilize training and improve performance.

2.2 The Decoder: Generating the Output Sequence

The decoder is responsible for generating the output sequence based on the encoded representation. It also consists of multiple layers, each containing three sub-layers:

-

Masked Multi-Head Self-Attention: This sub-layer applies the self-attention mechanism to the output sequence, but with a mask that prevents the model from attending to future tokens. This is necessary to ensure that the model only uses information from the past when generating each token.

-

Multi-Head Attention: This sub-layer attends to the output of the encoder, allowing the decoder to incorporate information from the input sequence when generating the output sequence.

-

Feed-Forward Network: This sub-layer applies a feed-forward neural network to each token representation, further processing and refining the contextualized representation.

Similar to the encoder, each sub-layer is followed by a residual connection and layer normalization.

2.3 Positional Encoding: Injecting Order Information

Since transformers process sequences in parallel, they are inherently order-agnostic. To address this, positional encoding is used to add information about the position of tokens in the sequence.

Positional encoding is typically implemented by adding a vector to each token embedding that encodes its position in the sequence. This vector can be learned or fixed, but the key is that it provides the model with information about the order of tokens in the sequence.

2.4 Multi-Head Attention: Capturing Diverse Relationships

The multi-head attention mechanism is a crucial component of the transformer architecture. It allows the model to attend to different aspects of the input sequence simultaneously, capturing diverse relationships between tokens.

In multi-head attention, the input sequence is transformed into multiple sets of queries, keys, and values. Each set is then used to calculate attention scores and generate a weighted representation of the input sequence. The results from each set are then concatenated and transformed to produce the final output.

By using multiple attention heads, the model can capture a wider range of relationships between tokens, leading to improved performance.

3. Applications of Transformer Models: Transforming Industries

Transformer models have found widespread applications across various industries, revolutionizing the way we interact with technology and information. Their ability to process sequential data with exceptional accuracy and efficiency has made them indispensable tools for a wide range of tasks.

3.1 Natural Language Processing (NLP): The Reign of Transformers

NLP is arguably the area where transformer models have had the most significant impact. They have become the go-to architecture for a wide range of NLP tasks, including:

-

Machine Translation: Transformer models have achieved state-of-the-art results in machine translation, enabling accurate and fluent translation between languages.

-

Text Summarization: Transformers can generate concise and informative summaries of long documents, saving time and effort for readers.

-

Question Answering: Transformers can answer questions based on a given context, providing accurate and relevant information.

-

Sentiment Analysis: Transformers can analyze text to determine the sentiment expressed, which is useful for understanding customer opinions and brand perception.

-

Text Generation: Transformers can generate realistic and coherent text, which is useful for creating chatbots, writing articles, and more.

3.2 Computer Vision: A New Perspective

While transformers were initially designed for NLP tasks, they have also shown promising results in computer vision. By treating images as sequences of patches, transformers can be adapted to perform tasks such as:

-

Image Classification: Transformers can classify images into different categories, achieving state-of-the-art results on benchmark datasets.

-

Object Detection: Transformers can detect and locate objects within images, which is useful for tasks such as autonomous driving and surveillance.

-

Image Segmentation: Transformers can segment images into different regions, which is useful for tasks such as medical image analysis and satellite imagery analysis.

3.3 Speech Recognition: Understanding Human Voices

Transformer models have also been successfully applied to speech recognition, enabling accurate and efficient transcription of spoken language. They can process audio sequences and convert them into text, which is useful for tasks such as:

-

Voice Assistants: Transformers power voice assistants like Siri and Alexa, enabling them to understand and respond to user commands.

-

Transcription Services: Transformers can transcribe audio and video recordings, which is useful for creating subtitles, generating meeting minutes, and more.

-

Speech Synthesis: Transformers can generate realistic and natural-sounding speech, which is useful for creating voiceovers, generating audiobooks, and more.

3.4 Healthcare: Revolutionizing Medical Practices

Transformers are making significant strides in healthcare, enabling new and improved medical practices. They can be used for tasks such as:

-

Medical Image Analysis: Transformers can analyze medical images to detect diseases, diagnose conditions, and monitor treatment progress.

-

Drug Discovery: Transformers can analyze molecular structures and predict the efficacy of new drugs, accelerating the drug discovery process.

-

Personalized Medicine: Transformers can analyze patient data to personalize treatment plans and improve patient outcomes.

3.5 Finance: Enhancing Financial Services

Transformers are also being used in the finance industry to enhance financial services and improve decision-making. They can be used for tasks such as:

-

Fraud Detection: Transformers can detect fraudulent transactions by analyzing patterns in financial data.

-

Risk Assessment: Transformers can assess the risk of lending to borrowers by analyzing their financial history and creditworthiness.

-

Algorithmic Trading: Transformers can develop and execute trading strategies based on market data, improving investment returns.

4. Training Transformer Models: A Step-by-Step Guide

Training transformer models can be a complex and computationally intensive process, but with the right approach and tools, it can be achieved effectively. Here is a step-by-step guide to training transformer models:

4.1 Data Preparation: The Foundation of Success

The first step in training a transformer model is to prepare the data. This involves collecting, cleaning, and preprocessing the data to make it suitable for training.

-

Data Collection: Gather a large and diverse dataset that is representative of the task you want to perform.

-

Data Cleaning: Remove noise, errors, and inconsistencies from the data.

-

Data Preprocessing: Tokenize the text, convert it to lowercase, and remove punctuation.

4.2 Model Selection: Choosing the Right Architecture

The next step is to select the appropriate transformer architecture for your task. There are many different transformer architectures available, each with its own strengths and weaknesses.

-

BERT: A popular transformer architecture for NLP tasks such as text classification and question answering.

-

GPT: A transformer architecture for text generation tasks such as chatbot development and article writing.

-

T5: A transformer architecture that can perform a wide range of NLP tasks using a unified text-to-text format.

4.3 Hyperparameter Tuning: Optimizing Performance

Once you have selected a transformer architecture, you need to tune the hyperparameters to optimize performance. Hyperparameters are parameters that are not learned during training, but rather set before training begins.

-

Learning Rate: The learning rate controls how much the model updates its weights during each iteration of training.

-

Batch Size: The batch size controls how many data samples are processed in each iteration of training.

-

Number of Layers: The number of layers in the transformer architecture.

-

Number of Attention Heads: The number of attention heads in the multi-head attention mechanism.

4.4 Training Process: Iterative Learning

The training process involves iteratively feeding the data to the model and updating the weights based on the error between the predicted output and the actual output.

-

Forward Pass: Feed the input data to the model and generate a prediction.

-

Loss Calculation: Calculate the loss between the predicted output and the actual output.

-

Backward Pass: Calculate the gradients of the loss with respect to the model weights.

-

Weight Update: Update the model weights based on the gradients and the learning rate.

4.5 Evaluation and Refinement: Ensuring Quality

After training the model, it is important to evaluate its performance on a held-out dataset. This will help you assess the model’s generalization ability and identify areas for improvement.

-

Accuracy: The percentage of correctly classified samples.

-

Precision: The percentage of correctly predicted positive samples out of all predicted positive samples.

-

Recall: The percentage of correctly predicted positive samples out of all actual positive samples.

-

F1-Score: The harmonic mean of precision and recall.

5. The Future of Transformer Models: Trends and Innovations

Transformer models have already revolutionized the field of machine learning, and their future looks even brighter. Researchers and engineers are constantly developing new techniques and innovations that are pushing the boundaries of what is possible with transformer models.

5.1 Larger Models: Scaling Up Performance

One of the most prominent trends in transformer research is the development of larger models with more parameters. These larger models have shown to achieve state-of-the-art results on a wide range of tasks.

-

GPT-3: A transformer model with 175 billion parameters that can generate realistic and coherent text.

-

Megatron-Turing NLG: A transformer model with 530 billion parameters that can answer questions, write articles, and generate code.

-

Switch Transformer: A transformer model with over 1 trillion parameters that uses a mixture-of-experts architecture to improve performance.

5.2 More Efficient Models: Optimizing Resource Usage

While larger models have shown to achieve better performance, they also require more computational resources to train and deploy. Researchers are therefore working on developing more efficient transformer models that can achieve similar performance with fewer resources.

-

Knowledge Distillation: A technique for training smaller models to mimic the behavior of larger models.

-

Model Pruning: A technique for removing unnecessary connections from a model to reduce its size and complexity.

-

Quantization: A technique for reducing the precision of model weights to reduce memory usage and improve inference speed.

5.3 Multimodal Transformers: Bridging the Gap Between Modalities

Another exciting trend in transformer research is the development of multimodal transformers that can process and integrate information from multiple modalities, such as text, images, and audio.

-

VisualBERT: A multimodal transformer that can process both text and images, enabling tasks such as image captioning and visual question answering.

-

SpeechBERT: A multimodal transformer that can process both text and audio, enabling tasks such as speech recognition and speech translation.

5.4 Explainable AI (XAI): Unveiling the Black Box

As transformer models become more complex and powerful, it is increasingly important to understand how they make decisions. Explainable AI (XAI) is a field of research that aims to develop techniques for making AI models more transparent and interpretable.

-

Attention Visualization: Visualizing the attention weights of a transformer model to understand which parts of the input sequence are most important for making a prediction.

-

Saliency Maps: Generating saliency maps that highlight the regions of an image that are most important for making a classification decision.

-

Rule Extraction: Extracting rules from a trained model to understand the logic behind its decisions.

5.5 Ethical Considerations: Ensuring Responsible AI

As transformer models are deployed in more and more applications, it is important to consider the ethical implications of their use. Researchers are working on developing techniques for mitigating bias, ensuring fairness, and promoting responsible AI.

-

Bias Detection: Developing techniques for detecting and mitigating bias in training data.

-

Fairness Metrics: Developing metrics for measuring the fairness of AI models.

-

Privacy-Preserving AI: Developing techniques for training and deploying AI models without compromising user privacy.

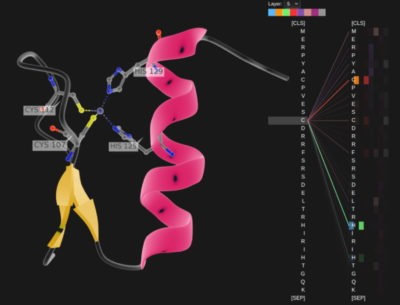

Transformer model applied to protein analysis

Transformer model applied to protein analysis

6. Transformer Models vs. Other Neural Networks: A Comparison

Transformer models have emerged as a dominant force in machine learning, but it’s essential to understand how they compare to other neural network architectures. Here’s a comparison of transformer models with recurrent neural networks (RNNs) and convolutional neural networks (CNNs):

6.1 Transformer Models vs. Recurrent Neural Networks (RNNs)

RNNs were the go-to architecture for processing sequential data before the advent of transformers. However, transformers offer several advantages over RNNs:

| Feature | Transformer Models | Recurrent Neural Networks (RNNs) |

|---|---|---|

| Parallel Processing | Can process entire sequences in parallel | Process sequences sequentially |

| Long-Range Deps. | Captures long-range dependencies with self-attention | Struggles with long-range dependencies |

| Training Speed | Faster training due to parallel processing | Slower training due to sequential processing |

| Scalability | Highly scalable to large datasets | Limited scalability |

| Complexity | More complex architecture | Simpler architecture |

| Vanishing Gradient | Less susceptible to vanishing gradient problem | More susceptible to vanishing gradient problem |

6.2 Transformer Models vs. Convolutional Neural Networks (CNNs)

CNNs are primarily used for image processing, but they can also be applied to sequential data. However, transformers offer some advantages over CNNs for certain tasks:

| Feature | Transformer Models | Convolutional Neural Networks (CNNs) |

|---|---|---|

| Data Type | Primarily for sequential data (text, audio, video) | Primarily for image data |

| Global Context | Captures global context with self-attention | Focuses on local patterns |

| Long-Range Deps | Excels at capturing long-range dependencies | Limited ability to capture long-range dependencies |

| Interpretability | Attention mechanisms provide some interpretability | Less interpretable |

6.3 When to Use Transformer Models

Transformer models are particularly well-suited for tasks that require:

- Processing sequential data with long-range dependencies.

- Capturing global context within the data.

- Parallel processing for faster training.

- Scalability to large datasets.

7. Overcoming Challenges in Transformer Models

While transformer models have achieved remarkable success, they also present certain challenges that researchers and engineers are actively working to address.

7.1 Computational Cost: Balancing Performance and Resources

Transformer models, especially large ones, can be computationally expensive to train and deploy. This cost can be a barrier to entry for researchers and organizations with limited resources.

-

Model Compression Techniques: Techniques like pruning, quantization, and knowledge distillation can reduce the size and complexity of transformer models, making them more efficient to deploy.

-

Hardware Acceleration: Specialized hardware like GPUs and TPUs can accelerate the training and inference of transformer models.

7.2 Data Requirements: The Need for Large Datasets

Transformer models typically require large amounts of training data to achieve optimal performance. This can be a challenge for tasks where data is scarce or expensive to obtain.

-

Data Augmentation: Techniques like back-translation, synonym replacement, and random insertion can be used to artificially increase the size of the training dataset.

-

Transfer Learning: Pre-training transformer models on large, publicly available datasets and then fine-tuning them on smaller, task-specific datasets can reduce the amount of data required for training.

7.3 Interpretability: Understanding Model Decisions

Transformer models can be difficult to interpret, making it challenging to understand how they make decisions. This lack of interpretability can be a concern for applications where transparency and accountability are important.

-

Attention Visualization: Visualizing the attention weights of a transformer model can provide insights into which parts of the input sequence are most important for making a prediction.

-

Explainable AI (XAI) Techniques: Techniques like LIME and SHAP can be used to explain the predictions of transformer models in a human-understandable way.

8. Practical Tips for Working with Transformer Models

Working with transformer models can be a rewarding but also challenging experience. Here are some practical tips to help you get the most out of transformer models:

8.1 Start with Pre-trained Models

Pre-trained transformer models like BERT, GPT, and T5 are readily available and can be fine-tuned for a wide range of tasks. Starting with a pre-trained model can save you a significant amount of time and resources.

8.2 Use a High-Level Library

Libraries like TensorFlow, PyTorch, and Hugging Face Transformers provide high-level APIs that make it easier to work with transformer models. These libraries handle many of the low-level details, allowing you to focus on the task at hand.

8.3 Monitor Training Progress

It’s important to monitor the training progress of your transformer model to ensure that it’s learning correctly. Monitor metrics like loss, accuracy, and F1-score to identify potential problems early on.

8.4 Experiment with Hyperparameters

Hyperparameters like learning rate, batch size, and number of layers can have a significant impact on the performance of your transformer model. Experiment with different hyperparameter settings to find the optimal configuration for your task.

8.5 Use Regularization Techniques

Regularization techniques like dropout and weight decay can help prevent overfitting, which can improve the generalization performance of your transformer model.

8.6 Evaluate on Multiple Metrics

Evaluate your transformer model on multiple metrics to get a comprehensive understanding of its performance. Don’t rely solely on accuracy, as it can be misleading in some cases.

9. Resources for Learning More About Transformer Models

If you’re interested in learning more about transformer models, here are some resources to get you started:

-

Research Papers: The original “Attention is All You Need” paper is a must-read for anyone interested in transformer models. Other important papers include BERT, GPT, and T5.

-

Online Courses: Platforms like Coursera, edX, and Udacity offer courses on deep learning and transformer models.

-

Tutorials and Blog Posts: Many websites and blogs offer tutorials and explanations of transformer models. The Hugging Face Transformers library also has excellent documentation and tutorials.

-

Books: Books like “Deep Learning” by Ian Goodfellow, Yoshua Bengio, and Aaron Courville provide a comprehensive introduction to deep learning, including transformer models.

10. Frequently Asked Questions (FAQs) About Transformer Models

Here are some frequently asked questions about transformer models:

-

What is a transformer model?

A transformer model is a neural network architecture that uses self-attention mechanisms to process sequential data. -

How do transformer models work?

Transformer models use self-attention to weigh the importance of different parts of the input sequence when processing each token. -

What are the advantages of transformer models?

Transformer models can process sequences in parallel, capture long-range dependencies, and scale to large datasets. -

What are the applications of transformer models?

Transformer models are used in NLP, computer vision, speech recognition, healthcare, finance, and more. -

How do I train a transformer model?

Training a transformer model involves data preparation, model selection, hyperparameter tuning, training, and evaluation. -

What are the challenges of transformer models?

Challenges include computational cost, data requirements, and interpretability. -

What is self-attention?

Self-attention is a mechanism that allows a model to weigh the importance of different parts of the input sequence when processing each token. -

What is positional encoding?

Positional encoding is a technique for adding information about the position of tokens in the sequence, as transformers are inherently order-agnostic. -

What is multi-head attention?

Multi-head attention allows the model to attend to different aspects of the input sequence simultaneously, capturing diverse relationships between tokens. -

What are some popular transformer models?

Popular transformer models include BERT, GPT, T5, and Transformer XL.

We hope this comprehensive guide has provided you with a deeper understanding of transformer models and their potential. At LEARNS.EDU.VN, we are committed to providing you with the latest insights and resources to help you succeed in your learning journey.

Are you ready to take your knowledge of machine learning to the next level? LEARNS.EDU.VN offers a wealth of resources, including detailed articles, expert tutorials, and comprehensive courses, all designed to help you master the intricacies of transformer models and other cutting-edge AI technologies. Whether you’re looking to build a new skill, enhance your career prospects, or simply satisfy your curiosity, LEARNS.EDU.VN has something for everyone. Visit our website at learns.edu.vn to explore our offerings and start your journey toward AI mastery today. Our team of experienced educators and industry experts is here to support you every step of the way. For inquiries, contact us at 123 Education Way, Learnville, CA 90210, United States, or reach out via Whatsapp at +1 555-555-1212.